- Google evaluates content quality, not how it was produced

- 81.9% of top 20 ranking pages use AI assistance in some form

- Human content outperforms raw AI content, but edited AI content competes equally

- Sites get penalized for deception and scaled low-quality publishing, not for using AI

- AI cannot replicate first-hand experience, which is what modern E-E-A-T requires

- Never publish raw AI output. Always proofread, fact-check, and add your own insight

- YMYL niches require extra caution whether or not you use AI

81.9% of pages ranking in Google’s top 20 results contain some form of AI-assisted content. That number comes from Ahrefs, which ran the analysis across 100,000 random keywords. Only 13.5% of those top-ranking pages were written entirely by humans.

So no, AI content is not bad for SEO. But that answer comes with real nuance, because the way you use AI determines whether it helps or quietly kills your rankings.

I’ve tested this across my own projects and client work, pulled data from Ahrefs, Neil Patel, and SEO.com, and combined it with what I see practitioners reporting in the field. Here’s the clearest picture I can give you of where AI content actually stands.

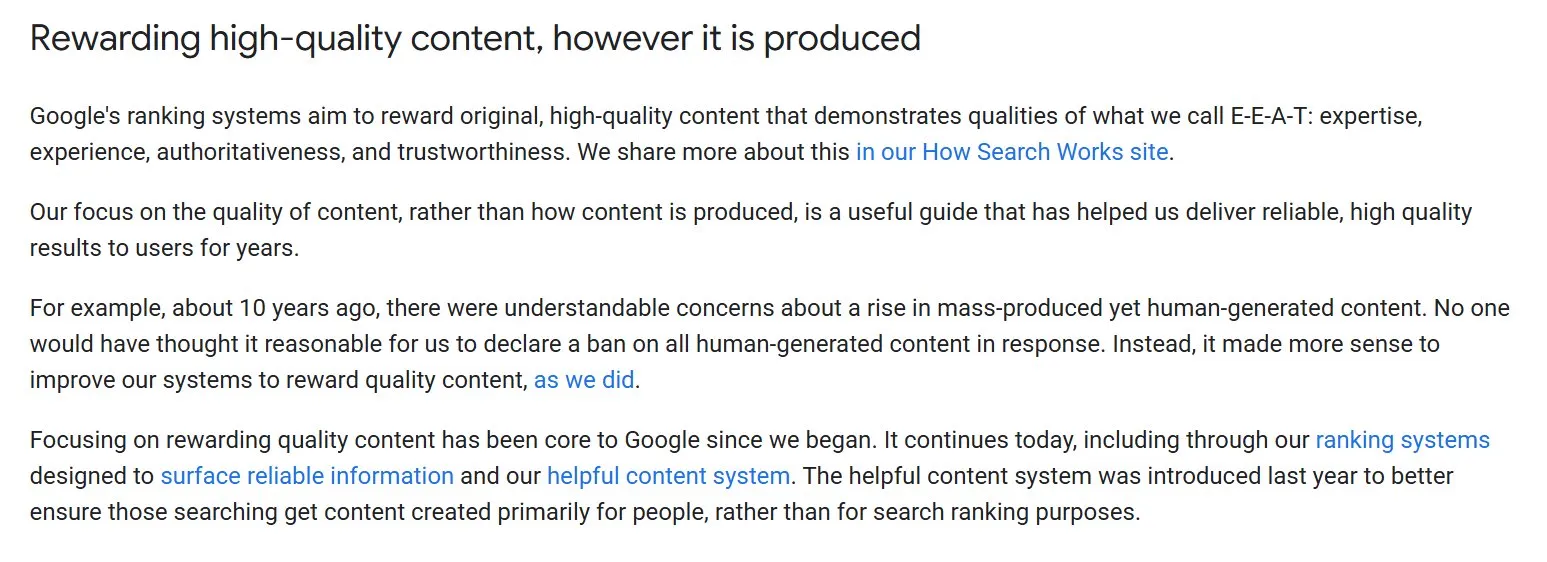

Google’s Stance on AI Content

Google has never penalized content based on how it was produced. Before AI writing tools existed, they talked about “automatically generated content,” and even then the policy was never about the method. It was always about the outcome.

Their spam guidelines say the same thing they’ve always said: content designed primarily to manipulate rankings is the problem, not automation itself.

Google themselves put it plainly in their Search Central guidance:

“Our focus on the quality of content, rather than how content is produced, is a useful guide that has helped us deliver reliable, high quality results to users for years.”

They even drew the comparison themselves. Ten years ago, mass-produced human content was the concern, and banning all human-written content in response would have been absurd. They improved their systems to reward quality instead. Their position on AI content follows the exact same logic.

What makes this even clearer is what Google does with its own products…

Does AI Content Actually Rank?

It does, and the data backs it up. Ahrefs’ study found that only 4.6% of top-ranking pages were fully AI-generated, but 81.9% used AI to some degree, with most falling into the moderate-to-heavy assistance range. AI content is already embedded in what ranks.
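As a quick sanity check on those figures, here is a minimal Python sketch. It assumes the three shares quoted in this article (13.5%, 81.9%, 4.6%) are mutually exclusive categories of the same sample, which the fact that they add up to 100 suggests but which is an assumption on my part. The dictionary keys are illustrative labels, not Ahrefs' own terms.

```python
# Shares of top-20 pages as quoted in this article, in percent.
# Assumption: the three categories do not overlap.
shares = {
    "human_only": 13.5,
    "human_ai_mix": 81.9,
    "ai_only": 4.6,
}

# If the categories are exhaustive, they should add up to 100.
total = round(sum(shares.values()), 1)

# Pages with any AI involvement = mixed pages + fully AI pages.
any_ai = round(shares["human_ai_mix"] + shares["ai_only"], 1)

print(f"Total share covered: {total}%")
print(f"Pages with any AI involvement: {any_ai}%")
```

Under that assumption, the share of top-ranking pages with any AI involvement is slightly higher than the headline 81.9%, because the fully AI-generated pages count too.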

Neil Patel’s team ran a more controlled experiment across 68 sites with 744 articles, split evenly between human and AI writers. Five months in, human articles averaged 283 visitors per month. AI articles averaged 52. Human content outranked AI content 94.12% of the time. Those numbers are worth paying attention to, but they don’t tell the whole story.
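To put those numbers in proportion, here is a small Python sketch of the arithmetic. It uses only the figures quoted above; the variable names are illustrative, and the per-group count assumes "split evenly" means exactly half of the articles each.

```python
# Figures quoted above from the experiment.
sites = 68
articles = 744
human_visits = 283  # average monthly visitors per human article
ai_visits = 52      # average monthly visitors per AI article

# How many times more traffic the average human article received.
traffic_ratio = round(human_visits / ai_visits, 2)

# Assumption: an even split means exactly half the articles per group.
articles_per_group = articles // 2

# Average number of articles published per site.
articles_per_site = round(articles / sites, 1)

# 94.12% matches 64 out of 68, which would be consistent with a
# per-site comparison. This is an inference from the arithmetic only.
sites_where_human_won = round(64 / sites * 100, 2)

print(traffic_ratio, articles_per_group, articles_per_site, sites_where_human_won)
```

The traffic gap works out to roughly five and a half times, measured on about eleven articles per site.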

In my own testing across client sites, I haven’t seen that kind of gap. Both AI-assisted and human-written content have performed well, and sometimes the AI-assisted pieces actually outperformed the human-written ones.

What I’ve consistently found is that the performance difference isn’t really about whether AI was involved. It comes down to how much human input went into the final piece.

Raw AI output underperforms. Edited, experience-enriched, fact-checked AI content competes just fine.

Patel’s experiment likely reflects what happens when AI content skips that editorial layer, which is exactly what most people do. The gap in his data isn’t an AI problem. It’s a process problem.

Can 100% AI-generated content rank? Technically yes: the data shows 4.6% of top-ranking pages are fully AI-generated. But that number tells you it’s rare and not a smart strategy. And in my experience, it only ranks in the short term. Without any human input holding it together, that content has no real foundation and tends to drop once Google’s systems catch up with it.

Publishing raw AI output with zero human editing is the fastest way to produce content that looks fine on the surface and performs poorly everywhere else. You’re removing the one thing that separates useful content from average content: a real person who knows the topic, verified the claims, and had something worth saying.

I would never publish a piece without going through it myself, and I wouldn’t recommend it to anyone, whether I work with them or not.

Where AI Content Falls Short

Accuracy and Hallucination Risk

AI tools fabricate information. Not occasionally, consistently. They produce statistics, citations, and factual claims that sound authoritative and are simply wrong. The errors don’t always announce themselves either. They get buried inside otherwise accurate paragraphs, which makes them easy to miss if you’re reading quickly.

Not verifying stats is one of the most common mistakes I see people make with AI content. You ask AI for a number, it gives you one, you publish it, and that number either doesn’t exist or came from a source that says something completely different. That’s a credibility problem that compounds over time, and once a reader catches it, they’re not coming back.

In YMYL categories especially (healthcare, finance, legal), a single wrong claim can have consequences that go well beyond a ranking drop. The hallucination risk alone is reason enough to fact-check everything AI produces before it goes anywhere near a publish button.

Lack of Originality

AI generates content by pulling patterns from existing web content. It synthesizes what’s already out there, which means it can’t produce anything genuinely new. If two competing sites use the same tool and prompt on the same topic, the output will be similar enough to create real problems.

Google has always had issues with duplicate and near-duplicate content regardless of how it was produced. AI just makes it easier to create that problem at scale.

The deeper issue is strategic. Content that adds no new perspective, data, or insight gives readers no reason to choose it over what’s already ranking. That’s not an AI problem specifically, it’s a quality problem. But AI accelerates it.

Experience-Based Niches

There are entire categories of content where AI simply cannot compete with genuine human expertise. I don’t think the gap between AI and human content will fully close when it comes to first-hand experience. AI is good at writing. It is not good at replicating lived experience, real case studies, or the kind of emotional resonance that modern content increasingly requires.

A dating coach with fifteen years of real-world experience brings pattern recognition, personal experience, and judgment calls that no language model can replicate. AI will pull together generic advice from hundreds of similar pages and reassemble it into something readable but hollow.

The same applies to any niche where readers are making decisions that directly affect their lives. Medical advice, personal finance, and mental health are areas where AI-assisted content without substantial human expertise layered on top will consistently underperform and, in YMYL categories specifically, poses risks that go well beyond SEO.

The Role of Prompting and Human Oversight

The single biggest variable in AI content quality is what you put in. A vague prompt produces generic output. Feeding an AI “write a blog post about SEO” will produce something that reads like every other surface-level article on the topic. The prompt needs to specify keywords, audience, structure, tone, and what sources to draw from. Give it nothing specific and that’s exactly what you’ll get back.
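One way to make that discipline concrete is to treat the prompt as a brief with required fields. The Python sketch below is illustrative only: `ContentBrief` and `build_prompt` are hypothetical names, the prompt wording is a placeholder rather than any prompt from this article, and nothing here calls an AI tool.

```python
from dataclasses import dataclass, field


@dataclass
class ContentBrief:
    """Hypothetical container for the details a prompt should specify."""
    topic: str
    keywords: list[str]
    audience: str
    structure: str
    tone: str
    sources: list[str] = field(default_factory=list)


def build_prompt(brief: ContentBrief) -> str:
    """Turn a brief into an explicit prompt; refuse to build a vague one."""
    required = ("keywords", "audience", "structure", "tone")
    missing = [name for name in required if not getattr(brief, name)]
    if missing:
        raise ValueError(f"Brief is too vague, missing: {', '.join(missing)}")

    lines = [
        f"Write a blog post about {brief.topic}.",
        f"Target keywords: {', '.join(brief.keywords)}",
        f"Audience: {brief.audience}",
        f"Structure: {brief.structure}",
        f"Tone: {brief.tone}",
    ]
    # Sources are optional, but naming them narrows what the model draws on.
    if brief.sources:
        lines.append("Draw only from these sources: " + "; ".join(brief.sources))
    return "\n".join(lines)
```

The point of the `ValueError` is the same as the point of the paragraph above: a brief that only names a topic is rejected before it ever reaches the model.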

Beyond the prompt, not adding personal experience, not proofreading, and not verifying stats are the three things I see people skip most often. Those three steps alone separate content that builds authority from content that quietly drags a site down. AI output is a starting point. Without a real human pass, it stays a starting point.

Neil Patel’s team found that pruning low-quality AI posts produced an 11 to 12 percent traffic lift across their test sites. That result makes sense when you think about it. Every weak page you publish dilutes the overall quality signal your site sends. AI makes it easy to accumulate those pages fast without realizing the damage they’re doing.

This is also where E-E-A-T becomes a practical concern rather than just a guideline to nod at. AI cannot demonstrate Experience because it has none. It hasn’t run a campaign, lost a client, tested a hypothesis, or made a judgment call under pressure.

Expertise, Authoritativeness, and Trustworthiness all require a real person standing behind the content with verifiable knowledge and a track record. AI can help you structure and articulate those things, but it cannot generate them.

The interview step in my workflow exists specifically for this reason. When Claude asks me about my experience with a topic and I answer in detail, those answers contain the kind of signal that no prompt alone can produce.

How to Use AI Content for SEO the Right Way

My content process runs in four steps, and I’ve refined it to the point where it consistently produces content that ranks and reads like something a human actually cared about writing.

The first step is research. I copy the top three to five ranking articles on the topic and ask Claude to summarize each one separately in the same chat. I do each competitor separately so the summaries stay clean and distinct.

This is what I call training the AI on fresh, ranking data. Strictly speaking, the model isn’t being retrained; it’s being given context. Instead of pulling from its training data alone, it’s now working from the actual content that Google has already validated as relevant and useful for that topic.
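The research step can be sketched in a few lines of Python. This is a rough illustration under stated assumptions: `research_prompts` is a hypothetical helper, the prompt wording is a placeholder and not one of the prompts from the download, and the function only prepares the text you would paste into the chat, one message per competitor.

```python
def research_prompts(articles: dict[str, str]) -> list[str]:
    """Return one summary prompt per competitor article, in input order.

    `articles` maps each article URL to its copied text.
    """
    if not 3 <= len(articles) <= 5:
        raise ValueError("Expected the top three to five ranking articles")

    prompts = []
    for n, (url, text) in enumerate(articles.items(), start=1):
        # One prompt per article keeps each summary clean and distinct.
        prompts.append(
            f"Competitor {n} ({url}). Summarize this article on its own. "
            f"Do not merge it with earlier summaries.\n\n{text.strip()}"
        )
    return prompts
```

Sending each returned prompt as its own message in the same chat mirrors the "each competitor separately" rule described above.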

The second step is the outline. Once the summaries are done, I prompt Claude to build a heading structure that follows natural semantic hierarchy, uses H3s only when a subtopic is genuinely distinct, and never creates headings just to label steps the H2 already describes.

The output is tight, logical, and covers the topic without padding. If you run the same prompt through ChatGPT you’ll get a different result. Each LLM has its own tendencies, and the outline quality reflects that.

The third step is the first draft. I use a detailed writing prompt that specifies tone, person, sentence structure, what phrases to avoid, and how the introduction should be framed. Because the research and outline were already done properly, the draft Claude produces is significantly better than what most people get when they skip those earlier steps.

I switched to Claude from ChatGPT after finding that GPT consistently gave me vague, thin output that needed heavy rewriting. Claude handles nuance and tone better, and the first drafts require less intervention.

The fourth step is the interview, and this is the one most people never do. I ask Claude to interview me about the topic, drawing out my opinions, experience, expertise, and any relevant case studies.

I answer those questions in detail, and that material gets woven into the article. This is what makes the content genuinely unique. No other site has my answers to those questions because no other site is me. This single step does more for E-E-A-T than anything else in the process.

After the interview, I proofread, remove buzzwords and filler, verify every stat, add internal and external links, and add relevant images. Then it’s ready.
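For the "verify every stat" part, a short script can at least make sure no number slips through unchecked. The sketch below is a minimal example, not part of the workflow described above: `stats_to_verify` is a hypothetical helper, the regular expression is a rough assumption about what a stat looks like, and the sentence splitting is deliberately naive. It only lists the claims; checking them against the original source is still manual work.

```python
import re

# A number such as "283", "100,000", or "81.9".
NUMBER = r"\d+(?:,\d{3})*(?:\.\d+)?"

# A number, optionally a range ("11 to 12"), optionally a percent marker.
STAT_PATTERN = re.compile(rf"\b{NUMBER}(?:\s+to\s+{NUMBER})?(?:\s?%|\s+percent)?")


def stats_to_verify(draft: str) -> list[tuple[str, str]]:
    """Pair every numeric claim with the sentence it appears in."""
    checklist = []
    # Naive sentence split: break after ., ! or ? followed by whitespace.
    for sentence in re.split(r"(?<=[.!?])\s+", draft.strip()):
        for match in STAT_PATTERN.finditer(sentence):
            checklist.append((match.group(0), sentence))
    return checklist
```

Running it over a finished draft gives a checklist of figures, each with its surrounding sentence, to tick off against the source before publishing.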

Want the exact prompts I use? I’ve put all 4 prompts from my content workflow into a free download. Copy and use them directly in Claude. [Download the Free Prompt PDF]

What Actually Gets Sites Penalized

The sites that have taken manual penalties for AI content were not penalized for using AI. They were penalized for deception and low-quality publishing at scale. Fake author bylines, fabricated bios, invented credentials, no editorial review, content pushed out so fast it couldn’t have been read by anyone.

I’ve seen this play out firsthand. One of my students got hit with a penalty, and when we looked at what happened, the AI had nothing to do with it. She copied the output, pasted it directly onto her site, and published without proofreading, without adding any first-hand experience, and without verifying a single claim.

The content was thin, generic, and offered nothing a reader couldn’t find on a dozen other pages. That’s what caused the problem. The AI was just the tool. The complete absence of human judgment was the actual issue.

Google’s scaled content abuse policy targets content produced at volume with no meaningful quality control. The pattern in the documented cases is consistent: sites spike their page count rapidly, traffic follows briefly, then the penalty hits.

Conflating that kind of abuse with simply using AI as part of a thoughtful workflow leads people to either fear AI unnecessarily or feel falsely confident that their bulk publishing strategy is fine. Neither serves them.

How Google Detects Low-Quality AI Content

Google can detect AI content. If tools like GPTZero or Originality can flag it, Google absolutely can. They build some of the largest LLMs on earth and have one of the most sophisticated engineering teams on the planet. Assuming otherwise is optimistic at best.

The patterns they’re likely looking for are not hard to identify once you’ve read enough AI output. Formulaic structure with no clear editorial voice. Content that technically covers a topic but doesn’t actually help anyone. The same information rephrased across multiple sections. Filler openers like “in this article we’ll discuss” or “here’s the truth about.” Over-polished, generic writing with no original claim, no specific example, and no real point of view.
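Some of those patterns are easy to catch during your own editing pass. The Python sketch below is a simple self-editing aid, not a description of how Google detects anything: `flag_filler` is a hypothetical helper, and the phrase list contains only the two openers quoted above, so you would extend it with the filler you tend to see in your own drafts.

```python
# The two filler openers quoted above; extend with your own.
FILLER_OPENERS = (
    "in this article we'll discuss",
    "here's the truth about",
)


def flag_filler(draft: str, phrases: tuple[str, ...] = FILLER_OPENERS) -> list[str]:
    """Return the filler phrases that appear in the draft."""
    # Lowercase and normalize curly apostrophes so both styles match.
    normalized = draft.lower().replace("\u2019", "'")
    return [phrase for phrase in phrases if phrase in normalized]
```

An empty list does not mean the draft is good. It only means these specific phrases are gone, and the rest of the list above still needs a human read.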

That said, Google cannot reliably detect AI content that has been genuinely edited, enriched with first-hand experience, and fact-checked. The detection problem isn’t really about identifying AI. It’s about identifying low effort.

Those two things overlap heavily because most people publish AI content without doing the work that makes it worth reading.

AI detectors like GPTZero and Originality are not reliable enough to use as a meaningful quality benchmark. I’ve never used one and I don’t recommend it. The question worth asking is not whether your content will pass a detector. It’s whether your content actually helps someone. If the answer is yes, the detection question stops mattering.

When to Be Careful With AI Content

There are pages I would never fully hand over to AI regardless of how good the prompt is.

Legal pages, privacy policies, and terms of service need to be accurate to the specific jurisdiction and business context. AI will produce something that sounds legally coherent and may be completely wrong for your situation.

Healthcare, finance, and any content that gives advice affecting someone’s health or money falls under YMYL. Google holds these pages to a higher standard and AI hallucination risk in these categories is not a theoretical concern, it’s a documented one.

If you’re a credible, established author with genuine expertise in these areas, you can use AI as an assist with thorough verification on top. For a new site or an author without established authority, the margin for error is much smaller and the consequences of getting it wrong are much larger.

About pages, mission statements, and anything that represents your personal or brand voice should come from you. AI can help you structure and refine them, but the substance needs to be yours. These are the pages that tell Google and your readers who you actually are, and no prompt can answer that for you.

Final Verdict

AI content is not bad for SEO. Publishing low-quality content at scale is bad for SEO, and AI makes that easier to do accidentally or intentionally. The tool is neutral. The strategy determines the outcome.

I use AI in every piece of content I produce. But I also train it on ranking data before I write, guide it through a structured outline, and use it to interview me so my experience and perspective actually make it into the article. Then I proofread everything and verify every claim.

That process takes more time than people expect, but the output is content that competes because it has something the purely AI-generated stuff doesn’t: a real point of view backed by real experience.

The sites winning with AI content treat it as one part of a process that still requires human judgment at every critical step. The sites getting penalized are the ones who figured out that AI can produce 50 articles in a day and decided that was the whole strategy.

It was never about human content versus AI. It’s about whether the content genuinely helps the person reading it. That standard existed long before AI and it’s not going anywhere.